E-E-A-T for AI Search: The Framework That Decides Who Gets Cited

E-E-A-T for AI search works differently than Google rankings. See which authority signals earn AI citations with real data and a self-assessment rubric.

Tanush Yadav

March 13, 2026 · 13 min read

- How Does E-E-A-T Work Differently in AI Search vs Google?

- Why Do Lower-Ranking Pages Get More AI Citations Than Top Results?

- What Is Entity Authority and Why Does It Matter More Than Page Authority?

- How Does First-Party Data Create an AI Citation Advantage?

- How Do ChatGPT, Perplexity, and AI Overviews Weigh E-E-A-T Differently?

- E-E-A-T Self-Assessment Rubric for AI Search

- Frequently Asked Questions

AI Overview citations from top-10 Google pages dropped from 76% to 38% in the past year. Rankings and citations have decoupled.

What got you ranking on Google won't automatically earn AI citations. E-E-A-T for AI search still dictates who gets cited, but the triggers are different now. These AI engines are looking for consensus across the web. They cross-check facts against dozens of sources and gravitate toward brands they recognize as genuine authorities (not just keyword-optimized pages).

This guide breaks down every E-E-A-T pillar, showing exactly how AI citation selection diverges from Google ranking. We built a self-assessment rubric from live campaign data so you can audit your site today. Hamming.ai grew 8.5x in organic traffic after we shifted from Google ranking tactics to AI citation signals. Real AI visibility demands a different authority-building playbook.

TLDR: The Shift in E-E-A-T Optimization

- Google's top 10 pages used to get 76% of AI citations. That number dropped to 38%. Ranking well on Google no longer guarantees AI citations.

- AI models don't look at your URL's link profile. They check whether your brand shows up consistently across the internet, measuring entity-level consensus.

- Pages with original data tables get cited 4.1x more than pages recycling third-party stats. Your own research is the strongest asset you have.

- ChatGPT, Perplexity, and AI Overviews each weigh authority signals differently. One-size-fits-all optimization doesn't work anymore.

- Brand search volume now carries a 0.334 correlation with LLM citations. The more people search for your company by name, the more likely AI models are to cite you.

- Here's the kicker: pages at positions #6-10 with solid E-E-A-T signals get 2.3x more AI citations than the #1 page with weak signals.

How Does E-E-A-T Work Differently in AI Search vs Google?

Here's the short version: AI models care about whether your brand shows up consistently across the web with accurate, dense information. They don't really look at your backlink count or your author bio page.

Think about how you've been doing SEO. You point backlinks at one URL. You add an author bio at the bottom of the post. You put reviews on the product page. None of that registers with AI models. They're checking whether your brand shows up the same way across dozens of different websites.

| E-E-A-T Pillar | Google Signals | AI Citation Signals |
|---|---|---|
| Experience | User reviews, testimonials, UGC | First-party data, original research, practitioner methodology |
| Expertise | Author credentials, topical depth, author pages | Entity recognition across training data, cross-source consistency |
| Authoritativeness | Backlinks, domain authority, referring domains | Brand mention frequency, citation in training sources |
| Trustworthiness | HTTPS, review signals, site age | Factual accuracy, source diversity, claim recency |

Here's a number that should get your attention: brand search volume has a 0.334 correlation with LLM citations according to Omniscient Digital. That's the strongest single predictor they found. When people Google your company by name, AI models notice. They connect your brand to specific topics. And unlike search engines (which counted links as votes), AI models count consistent brand mentions as votes.

Adding schema markup for AI visibility helps models parse your facts quickly. A broader generative engine optimization strategy covers the rest. Old-school E-E-A-T cares about individual pages and authors. The AI version? It's all about entities and brands. We see this play out in our own analytics every week. Pages sitting at positions #6 through #10 regularly steal AI citations from the #1 result.
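To make the schema markup point concrete, here is a minimal sketch that builds a schema.org `Organization` object as JSON-LD. The `@context`, `@type`, `name`, `url`, `description`, and `sameAs` fields are standard schema.org vocabulary. The helper name `organization_jsonld` and every value passed to it are placeholders for illustration, not a real brand's markup.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs ties the entity to its profiles on other sites
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)

# Placeholder values (assumptions for illustration only)
markup = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    description="Example Brand sells sun-protection umbrellas.",
    same_as=[
        "https://example.com/directory/example-brand",
        "https://example.com/social/example-brand",
    ],
)

# Wrap the JSON-LD in the script tag that goes in the page <head>
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the `description` string identical to the one used on third-party listings is one simple way to reinforce the cross-source consistency discussed above.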

Why Do Lower-Ranking Pages Get More AI Citations Than Top Results?

Pages ranking #6-10 with strong E-E-A-T signals earn 2.3x more AI citations than #1 pages with weak authority signals.

Research from ZipTie.dev backs this up. Here's why it happens: Google ranks pages in a linear order based on links and domain age. AI models throw that list away. They look at whether a source has dense, accurate facts and whether multiple other sites confirm what it says. The page with the best information wins, regardless of where Google puts it.

That's why the old playbook is broken. Some legacy domain might sit at #1 on Google because it's been collecting links for a decade. But the content on that page is thin. An AI model scrolls past it, spots a page at position #8 that's packed with unique data and strong entity signals, and cites that one instead. Answer quality is what matters. Link profiles are irrelevant.

We helped UV Blocker take advantage of this exact mechanism. They had zero AI footprint before we started. Within months, ChatGPT cited them for sun protection queries. We didn't chase the #1 spot on Google. Instead, we published original product data and secured brand mentions across health forums. UV Blocker doubled their weekly orders and went from 0 to 38K clicks in six months.

It all comes down to entity authority versus page authority. E-E-A-T for AI search requires internalizing that distinction. Most marketing teams miss it, buying links while AI models hunt for entities.

What Is Entity Authority and Why Does It Matter More Than Page Authority?

Entity authority tracks how well the internet recognizes your brand across every source. Page authority just counts the links pointing at one URL.

With traditional E-E-A-T, everything happens at the page level. You count backlinks to a specific blog post. You make sure the author byline links to a credential page. You embed some user reviews. Everything is measured URL by URL.

AI models think at the entity level. The questions they're asking are completely different. Does the internet talk about this brand the same way in multiple places? Do independent websites agree on what this company actually does? Has the training data seen this organization enough to consider it an expert? Without that kind of web-wide consensus, a single well-optimized URL won't cut it.

Look at our work with Hamming.ai. Today, 40% of their product demos come from Reddit or AI search. We got there by building their entity presence across AI training sources and targeted subreddits. We hammered the same brand message across every platform they touched. After a few months, models began treating Hamming.ai as a primary source for their category. Their AI visibility took off. No link building campaigns. Just a footprint that AI couldn't ignore.

Here is how to build entity authority effectively:

- Maintain consistent NAP (Name, Address, Phone) data everywhere.

- Get your brand mentioned in editorial content from authoritative publications. Unlinked mentions count.

- Create a Wikipedia or Wikidata entry if you don't have one. AI models check these.

- Make sure your product descriptions say the same thing on every platform you're listed on.

- Publish original research under your brand name. This is the single highest-value activity for entity authority.
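The consistency items in the list above can be spot-checked with a few lines of code. This is a rough sketch, not how any AI model measures consistency: it compares descriptions by word overlap (Jaccard similarity) and flags pairs that fall below a cutoff. The function names, the 0.5 threshold, and the sample listings are all assumptions made for illustration.

```python
import re
from itertools import combinations

def tokens(text):
    """Lowercase word set for a description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Share of distinct words two descriptions have in common (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)

def inconsistent_pairs(descriptions, threshold=0.5):
    """Return (platform_a, platform_b, score) for pairs scoring below threshold."""
    flagged = []
    for (pa, da), (pb, db) in combinations(descriptions.items(), 2):
        score = jaccard(da, db)
        if score < threshold:
            flagged.append((pa, pb, round(score, 2)))
    return flagged

# Hypothetical listings for a made-up brand
listings = {
    "website": "Acme builds automated testing tools for voice AI agents",
    "directory": "Acme builds automated testing tools for voice AI agents",
    "social": "We are a friendly marketing consultancy",
}

# The off-message social bio is flagged against both other listings
print(inconsistent_pairs(listings))
```

Word overlap is a crude proxy. It will miss paraphrases that mean the same thing, so treat a flagged pair as a prompt to read both descriptions, not as a verdict.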

But one signal matters more than the rest when building entity authority. Original experience data.

How Does First-Party Data Create an AI Citation Advantage?

Original data tables earn 4.1x more AI citations than pages rehashing aggregated third-party statistics. AI models prioritize unique information they can't find elsewhere.

The same ZipTie.dev research that uncovered the 2.3x lower-ranking advantage found this pattern too. Think about it from the model's perspective. It's read millions of pages quoting the same recycled industry stats. When your article shows up with data nobody else has, you've given it something genuinely new. The model needs your specific numbers to give a complete answer, so it cites you.

And that creates the Experience moat. Your competitors can use AI to generate content that looks authoritative. Bullet lists, confident tone, the whole package. What they can't fake is the underlying data. Nobody can generate real client results with a prompt. Nobody can fabricate survey data from actual users or invent proprietary benchmark numbers that hold up to scrutiny.

Content rooted in human experience wins this space. The key is formatting that experience so machines can extract it cleanly. When you publish measured outcomes from real campaigns, you give the model a strong reason to cite you. Your data isn't just content anymore. It's the foundation of your brand's authority in AI search.

Practical examples of first-party data that earn citations:

- Original benchmark studies with large, verifiable sample sizes.

- Survey data collected from your actual users (not third-party panel data).

- A/B test results with real statistical significance, not just "we saw improvement."

- Client case studies with named companies and specific metrics (like the Hamming.ai and UV Blocker examples above).

- Proprietary frameworks or methodologies your team developed and uses internally.
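For the A/B test item in the list above, "real statistical significance" can be checked before you publish. Here is a minimal sketch using a standard two-sided, two-proportion z-test built on the Python standard library. The function name and the visitor and conversion counts are hypothetical, and a real analysis may call for a different test depending on how the experiment was run.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical experiment: variant A converts 120 of 2,400 visitors,
# variant B converts 156 of 2,400
z, p_value = two_proportion_z_test(120, 2400, 156, 2400)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up numbers the p-value falls below the conventional 0.05 cutoff. Reporting the sample sizes and the p-value alongside the lift is what separates a citable result from "we saw improvement."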

Any of these will move the needle. But here's what most people miss: the way E-E-A-T for AI search gets weighted depends entirely on which platform you're trying to show up in.

How Do ChatGPT, Perplexity, and AI Overviews Weigh E-E-A-T Differently?

The three major platforms grade authority signals differently. ChatGPT cares most about entity recognition. Perplexity wants recency and diverse sourcing. AI Overviews look for factual density above all else.

You can't treat all AI search engines as one thing. They run on different architectures and prioritize different authority signals based on how they're built and trained.

| Signal | ChatGPT | Perplexity | AI Overviews |
|---|---|---|---|
| Entity recognition | High weight | Medium | Medium |
| Source recency | Low-Medium | High | High |
| Factual density | Medium | Medium | High |
| Cross-source consistency | High | Medium | Medium |
| Brand mention frequency | High | Low-Medium | Medium |

ChatGPT leans on its training data, and it doesn't update that data in real time. So brand recognition is king. If OpenAI's model has seen your company mentioned across hundreds of sources during training, it trusts you. If your brand barely shows up in those datasets, you're invisible to ChatGPT no matter how good your content is.

Perplexity is a different animal. It searches the live web, so what you published yesterday matters more than what you published in 2022. Drop a data-dense report today and Perplexity might cite it by tomorrow. For this platform, being fast and accurate beats being famous.

Google's AI Overviews are the most data-hungry of the three. They want content that answers the query with hard numbers, not opinions. Clean structured data and proper schema help you get included. It's more of an answer engine than a conversation partner, pulling exact numbers straight from your page.

Knowing what each platform values is step one. The best AI visibility tools help you track these metrics across platforms.

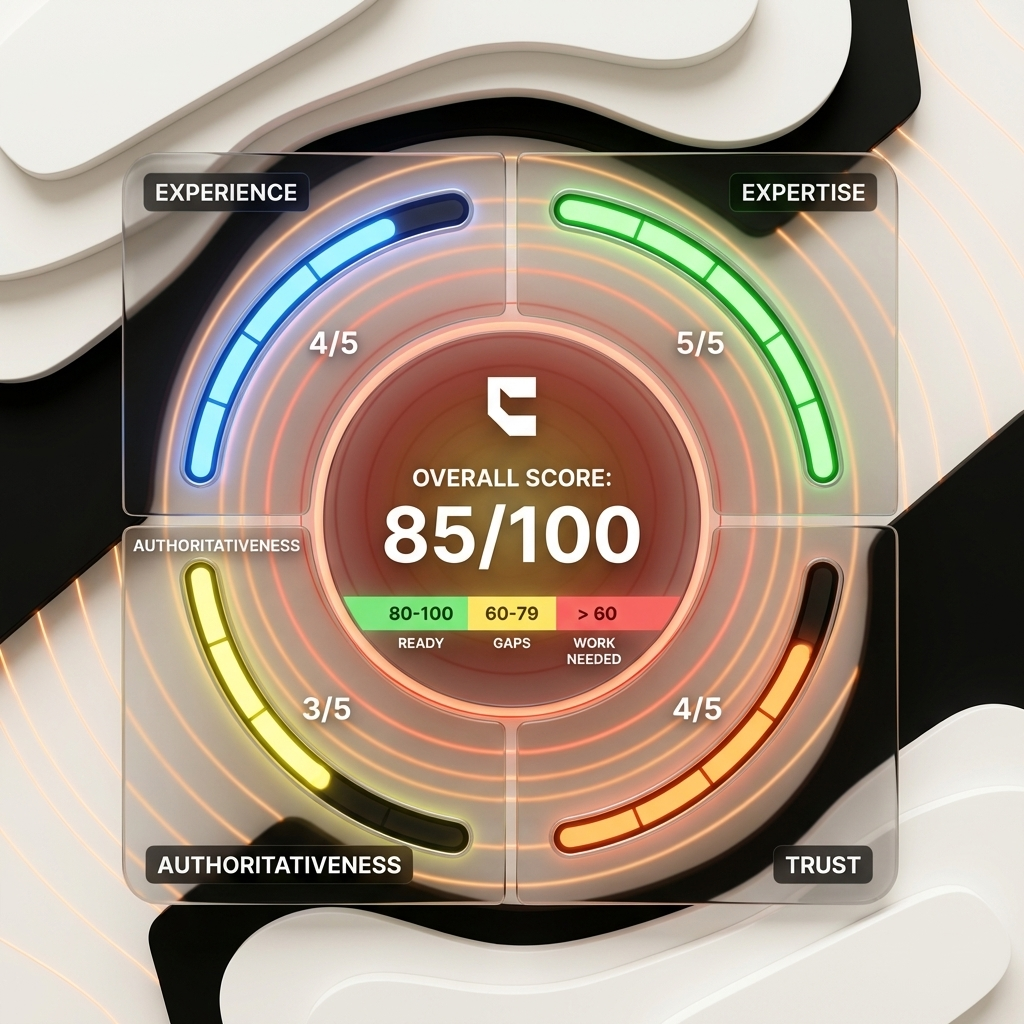

E-E-A-T Self-Assessment Rubric for AI Search

Score each E-E-A-T pillar on five specific indicators to find gaps between your Google authority and AI citation readiness.

Applying E-E-A-T for AI search starts with an honest self-assessment. We run this scoring framework for every new client to diagnose citation gaps. Grade your own site from 1 to 5 on each indicator below.

Experience Score (Out of 25):

- First-party data published on site (original research, surveys, case studies)

- Practitioner methodology documented (actual frameworks, not just advice)

- Real customer outcomes with specific metrics

- Original data tables or visualizations

- User-generated content and active community engagement

Expertise Score (Out of 25):

- Entity recognition (does searching your brand in ChatGPT return accurate info?)

- Google your brand name. Do at least 5 different sites describe what you do in the same way?

- Look at your content map. Are you covering the full topic cluster, or only the obvious head terms?

- Do your writers have a presence outside your own blog? Guest posts, podcasts, speaking gigs?

- When's the last time someone with real domain knowledge fact-checked your content?

Authoritativeness Score (Out of 25):

- Is your brand search volume going up, flat, or declining? Check Google Trends.

- Are journalists and bloggers mentioning you without being asked (and without a link)?

- Has your company been cited in any industry reports or academic papers?

- Do you have a Wikipedia or Wikidata entry? AI models reference these heavily.

- Does your brand messaging stay consistent across your website, social profiles, and third-party listings?

Trustworthiness Score (Out of 25):

- Factual claims cite specific sources with exact dates

- Content updated within the last 6 months

- Multiple content formats utilized (text, data, video, tools)

- No contradictory claims across your own published content

- Transparent methodology disclosure for all data

Add up your scores. Landing between 80 and 100? You're in good shape for AI citations. Between 60 and 79, there are gaps worth closing. Below 60 means you've got real work ahead. Want to go deeper? Measure your AI visibility quantitatively to validate the self-assessment. Every point you gain here shows up in your AI visibility ROI.
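If you want to track the rubric over time, the arithmetic is easy to script. The sketch below assumes each of the four pillars gets five grades from 1 to 5, which gives the 25-point pillar totals and 100-point overall score described above. The function name, the band labels, and the sample grades are illustrative, and the "below 3" flag mirrors the threshold this article's conclusion recommends.

```python
PILLARS = ("experience", "expertise", "authoritativeness", "trustworthiness")

def score_rubric(scores):
    """scores maps each pillar name to five indicator grades from 1 to 5."""
    pillar_totals = {}
    weak = []
    for pillar in PILLARS:
        grades = scores[pillar]
        if len(grades) != 5 or any(not 1 <= g <= 5 for g in grades):
            raise ValueError(f"{pillar}: expected five grades between 1 and 5")
        pillar_totals[pillar] = sum(grades)
        # Record (pillar, indicator position, grade) for anything graded below 3
        weak += [(pillar, i + 1, g) for i, g in enumerate(grades) if g < 3]
    total = sum(pillar_totals.values())
    if total >= 80:
        band = "in good shape for AI citations"
    elif total >= 60:
        band = "gaps worth closing"
    else:
        band = "real work ahead"
    return total, band, pillar_totals, weak

# Hypothetical grades for one site
example = {
    "experience": [4, 3, 5, 4, 2],
    "expertise": [3, 3, 4, 2, 4],
    "authoritativeness": [2, 3, 3, 1, 4],
    "trustworthiness": [4, 4, 3, 5, 4],
}
total, band, pillar_totals, weak = score_rubric(example)
print(total, band)      # 67 gaps worth closing
print(pillar_totals)
print(weak)
```

In this example the total of 67 hides a weak Authoritativeness pillar (13 out of 25), which is why the sketch returns the per-pillar totals and the low-graded indicators alongside the overall band.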

Frequently Asked Questions About E-E-A-T for AI Search

We hear these questions constantly from SEO teams trying to figure out how their existing authority work translates to AI citations.

Does E-E-A-T matter more for AI search than Google?

E-E-A-T matters equally for both, but runs on different mechanisms. Google measures page-level signals. AI models track entity-level signals across the internet. Same principles, different evidence. Focus on cross-source consistency and original data instead of chasing backlinks and formatting author bios.

Can AI-generated content demonstrate E-E-A-T?

Sure, AI text can look like expertise. But it can't run an actual survey, manage a real client campaign, or produce proprietary data from scratch. There's a reason original data tables earn 4.1x more citations. The Experience pillar specifically rewards content from people who've actually done the work.

How often should E-E-A-T content be updated for AI search?

Refresh your primary authority pages quarterly with new data and updated statistics. ChatGPT updates its training data slowly, but Perplexity searches the live web in real time. That split makes recency critical for capturing Perplexity citations specifically.

Why are some sites cited by AI but not ranking well on Google?

AI models prioritize entity authority and factual density over traditional ranking factors. Pages at positions #6-10 with strong E-E-A-T signals beat #1 pages by 2.3x in citations. Rankings and citations have decoupled. You need a separate optimization strategy for each channel.

Conclusion

The rules of authority have shifted. Here's what matters now:

- Rankings and citations decoupled. The citation rate from top-10 pages dropped from 76% to 38%.

- Entity over page. E-E-A-T for AI search operates at the entity level, not the page level.

- Data is your moat. Original data provides a 4.1x citation advantage over aggregated statistics.

- Platform differences exist. Each AI platform weighs authority signals differently. Optimize per platform.

Run the self-assessment rubric on your top five pages today. Any indicator scoring below a 3 needs attention before competitors fill the gap.

We help brands build the authority signals that earn AI citations. See our approach and pricing.