AI Content Audit: 7-Point Scorecard to Get Cited by AI

An AI content audit finds why AI won't cite your pages. Use our 7-point citability scorecard, prioritization framework, and real before/after data to fix it.

Tanush Yadav

March 18, 2026 · 12 min read

- What Is an AI Content Audit and How Is It Different From a Traditional SEO Audit?

- Why Isn't Your Existing Content Getting Cited by AI?

- Which Pages Should You Audit First?

- The 7-Point AI Citability Scorecard

- How Do You Audit a Page Step by Step?

- What Content Fixes Drive the Most AI Citations?

- How Do You Measure the Impact of Your AI Content Audit?

- Frequently Asked Questions About AI Content Audits

TL;DR: 80% of AI visibility wins come from fixing existing pages, not writing new ones. This guide gives you Cintra's 7-point citability scorecard to score any page, a prioritization matrix for which pages to audit first, and the specific fixes that move citation metrics.

76.4% of ChatGPT's top-cited pages were updated within the last 30 days. Your content from last year? AI is already ignoring it. Most brands have 50 to 200 pages sitting idle. They rank on Google. ChatGPT never cites them. The fix isn't creating new content. It's auditing what you already have.

We see this scenario daily. When we audit client content libraries, 80% of the AI visibility wins come from fixing existing pages, not writing new ones. A large repository of Google-optimized articles provides zero value in the generative search era if LLMs can't extract answers. Your audience asks Perplexity for software recommendations. They ask ChatGPT for strategic advice. Your pages don't appear.

This guide gives you the exact audit process we use at Cintra — our 7-point citability scorecard, a prioritization framework for which pages to fix first, and real before/after data showing what moves citation metrics. For the broader strategic context, read about generative engine optimization.

What Is an AI Content Audit and How Is It Different From a Traditional SEO Audit?

An AI content audit evaluates whether AI search engines can extract, trust, and cite your content — not just whether it ranks on Google.

Traditional SEO audits measure different variables. They check technical health, keyword density, broken links, and backlink profiles. The goal is crawlability and link equity. An AI content audit measures citability — can ChatGPT, Perplexity, or Google AI Overviews pull a clear answer from your page? A technical SEO pass means nothing if the bot can't isolate your core entity.

The output looks different too. A standard SEO audit hands you a spreadsheet of 404 errors. When we deliver an AI content audit to clients, the output is a focused, scored action list: specific pages with structural flaws blocking citations, and exact instructions for how to rewrite headings and format data. Understanding what AI visibility is changes your entire approach to content maintenance.

Why Isn't Your Existing Content Getting Cited by AI?

Five structural problems block most pages from AI citations: hidden content, image-based data, missing answer capsules, weak entity clarity, and absent trust signals.

These issues make your best pages invisible to LLMs. We discover these roadblocks in almost every client audit. Here's what we find.

Content hidden behind tabs or accordions. AI crawlers can't always trigger JavaScript-rendered elements. We see this constantly on product comparison pages. If your best answer requires a click, the bot moves on.

Image-based tables instead of HTML. Pricing tables, spec sheets, and comparison charts saved as images are invisible to AI. Generative engines can't extract tabular data from a PNG. They look for clean HTML tables elsewhere.

Missing answer capsule in the first paragraph. This is the most fixable and most impactful problem. AI needs a 15-to-30-word direct answer right after a heading. If your section opens with context instead of a crisp answer, AI skips it for a competitor who leads with the facts.

Weak entity clarity. AI can't figure out what the page is definitively about. The topic is implied through clever copywriting but never stated in a machine-readable format — no schema, no first-paragraph definition.

No authorship or trust signals. No author name, no credentials, no cited sources. AI has no reason to trust this page over millions of others. Implement proper E-E-A-T for AI search to prove credibility. Schema markup correlates with a 22% citation lift across 300,000+ URLs analyzed by Semrush.

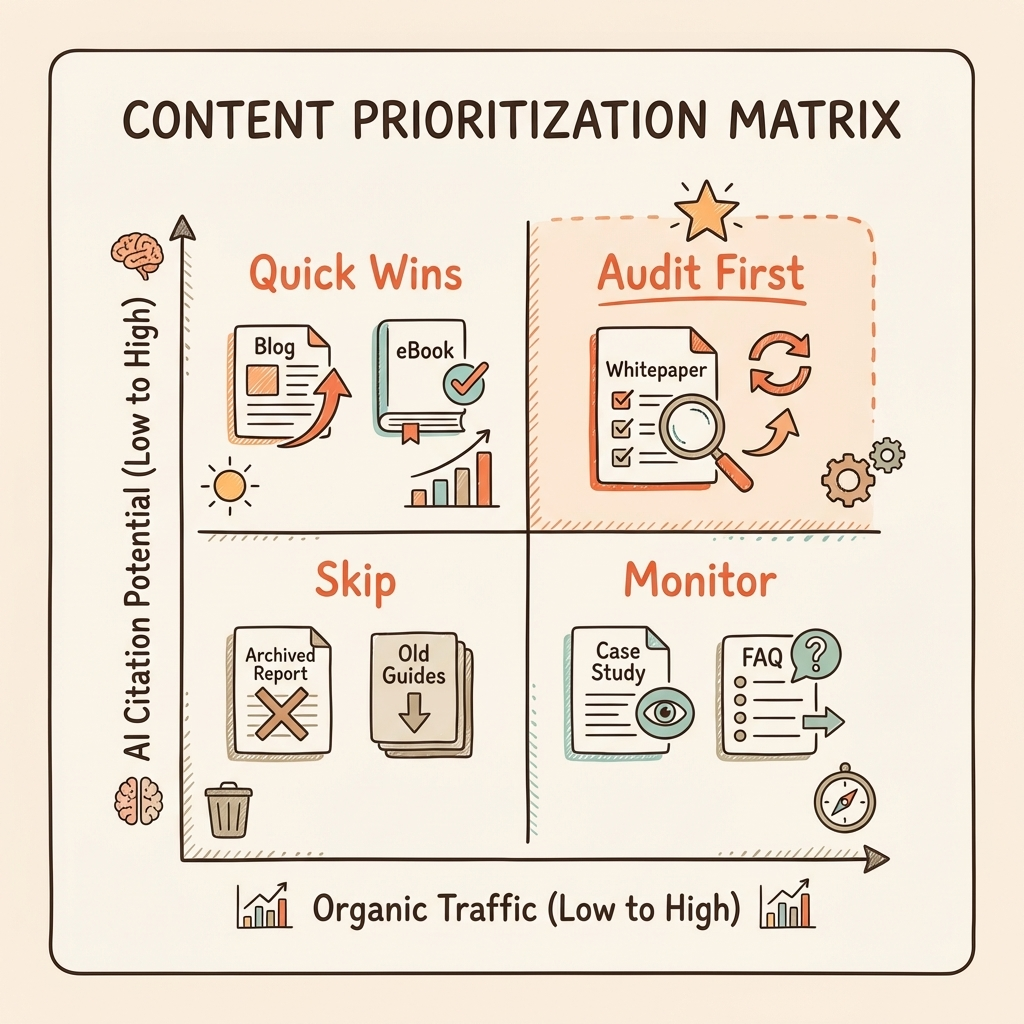

Which Pages Should You Audit First?

Prioritize pages by scoring four factors: existing organic traffic, conversion value, current AI citation status, and relevance to high-volume AI queries.

Not all pages deserve your time. You can't audit everything at once. A blog post generating 20 monthly visits shouldn't compete against a product page driving 2,000 visits. You need a system to rank your efforts.

| Factor | Weight | How to Score |
|---|---|---|
| Existing Organic Traffic | 25% | High (1,000+/mo), Medium (100-999), Low (<100) |
| Conversion Value | 25% | Product page > comparison page > informational post |
| Current AI Citation Status | 30% | Not cited = highest priority, partially cited = medium, fully cited = low |
| Topical Relevance to AI Queries | 20% | Does this page answer questions people actually ask AI? |

Score each factor from 1 to 3, where 3 means highest priority (a page AI does not cite at all scores 3 on citation status). Multiply each score by its weight and add the results. Stack rank pages by the total. A high-traffic comparison page that AI ignores entirely will land at the top of your list. Use the best AI visibility tools to check your current citation status across ChatGPT, Perplexity, and AI Overviews.
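The weighted scoring above can be sketched in a few lines of Python. This is a minimal illustration, not our production tooling: the `PageScores` class, the field names, and the example URLs are hypothetical, and it assumes 3 always means "highest priority" for every factor.

```python
from dataclasses import dataclass

# Weights taken from the prioritization matrix above.
WEIGHTS = {
    "organic_traffic": 0.25,
    "conversion_value": 0.25,
    "citation_status": 0.30,
    "topical_relevance": 0.20,
}


@dataclass(frozen=True)
class PageScores:
    """Hypothetical container for one page's four factor scores (1-3)."""
    url: str
    organic_traffic: int    # 1 = low, 3 = high
    conversion_value: int   # 1 = informational post, 3 = product page
    citation_status: int    # 1 = fully cited, 3 = not cited (assumed mapping)
    topical_relevance: int  # 1 = weak match to AI queries, 3 = strong match


def priority_score(page: PageScores) -> float:
    """Weighted sum of the four factor scores, each validated to be 1-3."""
    total = 0.0
    for factor, weight in WEIGHTS.items():
        score = getattr(page, factor)
        if score not in (1, 2, 3):
            raise ValueError(f"{factor} must be 1, 2, or 3, got {score}")
        total += weight * score
    return round(total, 2)


def stack_rank(pages: list[PageScores]) -> list[PageScores]:
    """Return pages ordered from highest to lowest priority score."""
    return sorted(pages, key=priority_score, reverse=True)


# Hypothetical pages, for illustration only.
pages = [
    PageScores("/blog/low-traffic-post", 1, 1, 2, 2),
    PageScores("/compare/high-traffic-page", 3, 2, 3, 3),
]
ranked = stack_rank(pages)
```

With these made-up inputs, the uncited high-traffic comparison page ranks first, which matches the behavior described above.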

Freshness plays a major role here. Roughly 50% of Perplexity's citations come from content published or updated in the current year. Freshly audited and updated pages get priority from the engines. Start with your top ten.

The 7-Point AI Citability Scorecard

The scorecard evaluates seven factors that determine whether AI can cite your page: entity clarity, answer formatting, structure, data density, schema, authorship, and source authority.

This framework turns subjective quality checks into an objective score. Evaluate each page against these seven criteria. We use this rubric for every client evaluation.

| # | Factor | What AI Checks | Score 1 (Fail) | Score 3 (Partial) | Score 5 (Pass) |
|---|---|---|---|---|---|
| 1 | Entity Clarity | Can AI identify what this page is definitively about? | Topic unclear, no definition | Topic implied but not stated explicitly | Clear entity definition in first 100 words + schema |
| 2 | Answer Formatting | Does the first paragraph after each heading directly answer the question? | Starts with context, no direct answer | Partial answer, 40+ words before getting to the point | 15-30 word answer capsule immediately after heading |
| 3 | Content Structure | Is content chunked into self-contained, extractable sections? | Long paragraphs, no heading hierarchy | Some headings, but sections aren't self-contained | 50-150 word self-contained chunks under clear H2/H3 headings |
| 4 | Statistical Density | Does the page include verifiable data, quotes, research? | No data, opinion-based | 1-2 generic stats without sources | 3+ sourced statistics, named expert quotes, cited research |
| 5 | Schema Completeness | Is structured data present and accurate? | No schema | Article schema only | Article + Author + FAQ + Organization schema |
| 6 | Authorship Signals | Can AI identify who wrote this and why they're credible? | No author listed | Author name only | Author name + bio + credentials + linked profiles |
| 7 | Source Authority | Does the page cite external sources that AI already trusts? | No external references | Links to generic sources | Cites peer-reviewed research, industry reports, official docs |

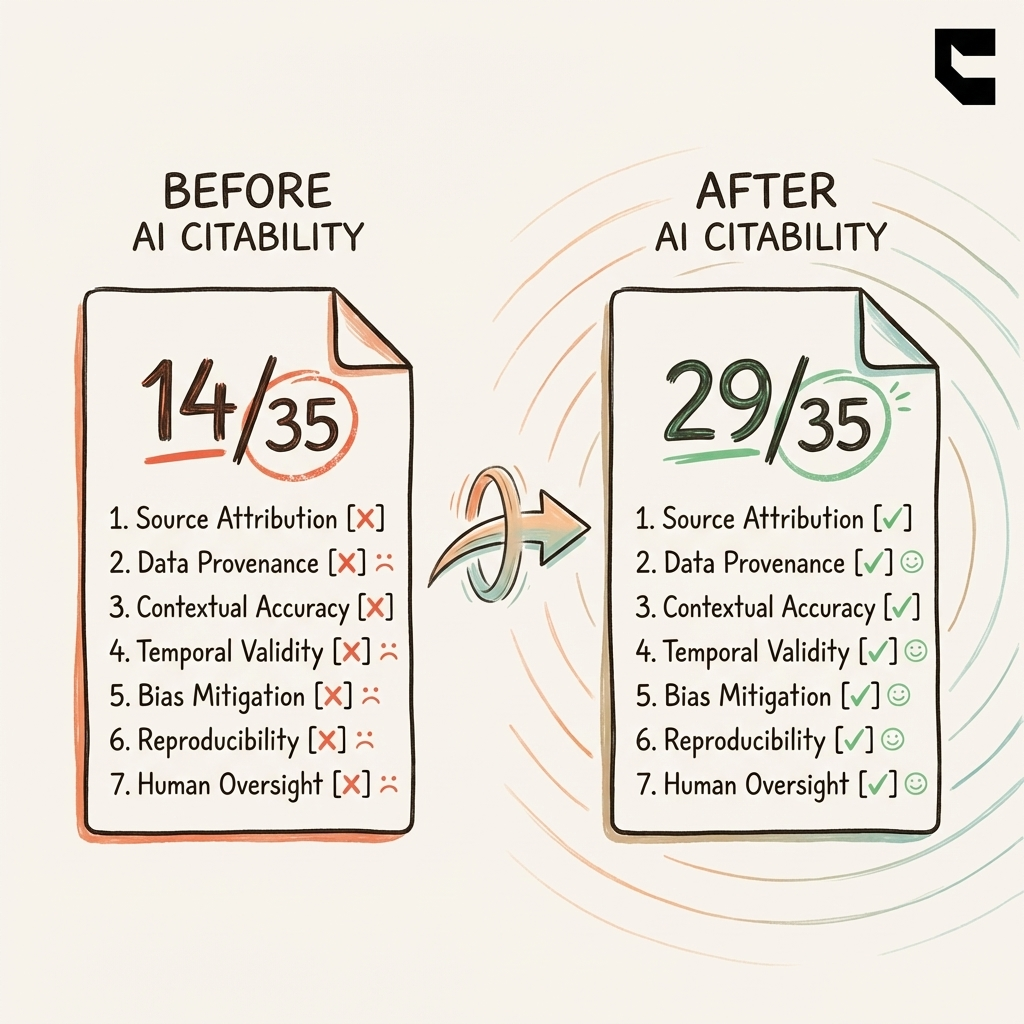

Score each factor from 1 to 5 and add the seven scores for a total out of 35. 28+ = citation-ready. 21-27 = needs targeted work. Under 21 = full restructure needed. A numeric total removes the guesswork.
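For teams that keep scores in a spreadsheet or script, the totals and thresholds above translate directly into code. The sketch below is illustrative: the factor keys and function names are our own naming for this example, not part of any tool.

```python
# The seven scorecard factors; key names are hypothetical.
FACTORS = (
    "entity_clarity",
    "answer_formatting",
    "content_structure",
    "statistical_density",
    "schema_completeness",
    "authorship_signals",
    "source_authority",
)


def total_score(scores: dict[str, int]) -> int:
    """Sum the seven factor scores after checking each is between 1 and 5."""
    missing = set(FACTORS) - set(scores)
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    for factor in FACTORS:
        if not 1 <= scores[factor] <= 5:
            raise ValueError(f"{factor} must be 1-5, got {scores[factor]}")
    return sum(scores[factor] for factor in FACTORS)


def readiness_band(total: int) -> str:
    """Map a total out of 35 to the three bands used in this guide."""
    if not 7 <= total <= 35:
        raise ValueError("total must be between 7 and 35")
    if total >= 28:
        return "citation-ready"
    if total >= 21:
        return "needs targeted work"
    return "full restructure needed"
```

Because every factor scores at least 1, the lowest possible total is 7, which is why the function rejects anything below it.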

How Do You Audit a Page Step by Step?

Select a page, identify target AI queries, test current citation status, score against all seven points, then prioritize fixes by impact.

Step 1: Select the page from your prioritized matrix. Don't pick pages at random. Follow the math.

Step 2: Identify the AI queries it should answer. What would someone ask ChatGPT that this page should be cited for? Write 3-5 natural-language queries. For a CRM comparison page: "What's the best CRM for early-stage startups?" or "Which CRM tools are popular with YC companies?"

Step 3: Test current citation status. Ask those queries across ChatGPT, Perplexity, and AI Overviews. Document results: cited as primary source, mentioned in passing, or absent entirely?

Step 4: Score the page against the 7-point scorecard. Mark each factor 1-5. Calculate the total out of 35.

Step 5: Identify fixes based on the lowest-scoring factors. Entity clarity at 1? Add a clear definition in the first paragraph. Answer formatting at 1? Rewrite every section opener.
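Step 5 amounts to sorting the scorecard and starting at the bottom. A minimal sketch, assuming scores are stored as a dictionary; the scorecard values here are hypothetical and do not come from a real audit.

```python
def weakest_factors(scores: dict[str, int], limit: int = 3) -> list[tuple[str, int]]:
    """Return up to `limit` (factor, score) pairs, lowest score first.

    Ties keep the order in which factors appear in `scores`,
    because Python's sort is stable.
    """
    return sorted(scores.items(), key=lambda item: item[1])[:limit]


# Hypothetical scorecard, for illustration only.
scores = {
    "entity_clarity": 1,
    "answer_formatting": 3,
    "content_structure": 3,
    "statistical_density": 5,
    "schema_completeness": 1,
    "authorship_signals": 3,
    "source_authority": 3,
}
fix_first = weakest_factors(scores, limit=2)
```

For this made-up page, entity clarity and schema completeness come back first, so those fixes go to the top of the list.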

A Real Before/After Example

One of our clients' comparison pages scored 14/35 on the initial audit. Entity clarity: 1 (the topic only appeared in the H1 and was never defined). Answer formatting: 2 (answers were buried in the third paragraph). Schema: 1 (none implemented).

We restructured the page completely. Added answer capsules to every section. Implemented Article + FAQ schema. Added a detailed author bio with credentials. Inserted four sourced statistics with named references. The score jumped to 29/35. Within six weeks, the page appeared in Perplexity citations for three of five target queries.

What Content Fixes Drive the Most AI Citations?

Answer-first formatting, self-contained 50-150 word chunks, HTML tables, Q&A patterns, and sourced statistics produce the largest citation improvements.

Answer-First Formatting

Rewrite the opening sentence of every section to directly answer the heading's question in 15-30 words. This single fix produces the biggest citation lift. Context follows the answer. Never bury facts beneath long introductions.

Before: "In today's rapidly evolving digital landscape, many marketers are beginning to wonder about the best practices for structuring content that AI systems prefer to cite..."

After: "AI systems cite content that leads with a direct answer in the first 30 words. Structure every section with the answer first, context second."
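The length half of this rule is easy to check automatically. The sketch below counts whitespace-separated words in a section opener and tests them against the 15-30 range. The function names are hypothetical, and the check only measures length: it cannot tell whether the opener actually answers the heading, so a human still has to read it.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def capsule_length_ok(opening: str, min_words: int = 15, max_words: int = 30) -> bool:
    """True if the section opener falls inside the answer-capsule word range."""
    return min_words <= word_count(opening) <= max_words


# The "After" example from this section.
after_example = (
    "AI systems cite content that leads with a direct answer in the first "
    "30 words. Structure every section with the answer first, context second."
)
after_ok = capsule_length_ok(after_example)
```

Run it against every section opener on a page and review the ones that fall outside the range first.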

Self-Contained Chunks

Break every section into 50-150 word blocks that stand alone. AI extracts chunks, not full articles. Pages built with self-contained sections get cited more often than wall-of-text content.
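A rough way to find oversized or undersized sections is to count words under each heading. This sketch assumes the page source is available as Markdown with `##` and `###` headings, which may not match your CMS. The function names are hypothetical, and pages that repeat a heading text would need a different key.

```python
import re

# Matches H2 and H3 Markdown headings only.
HEADING = re.compile(r"^(#{2,3})\s+(.*\S)\s*$")


def section_word_counts(markdown: str) -> dict[str, int]:
    """Map each H2/H3 heading to the number of words that follow it."""
    counts: dict[str, int] = {}
    current = None
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            current = match.group(2)
            counts[current] = 0
        elif current is not None:
            counts[current] += len(line.split())
    return counts


def out_of_range(counts: dict[str, int], low: int = 50, high: int = 150) -> list[str]:
    """Headings whose sections fall outside the 50-150 word target."""
    return [heading for heading, n in counts.items() if not low <= n <= high]


# Synthetic document, for illustration only.
sample = "## Fits\n" + "word " * 60 + "\n\n## Thin\nonly three words\n"
flagged = out_of_range(section_word_counts(sample))
```

Word count says nothing about whether a chunk stands alone, so treat flagged sections as candidates for review, not automatic failures.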

HTML Tables Over Images

Convert pricing, comparison, or spec data from images to HTML tables. AI can parse tables and cite specific cells. An image of your pricing grid is invisible to every LLM.
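If the underlying data already lives in a spreadsheet or database, generating the HTML table is a small scripting job. A minimal sketch using only Python's standard library; `html_table` is a hypothetical helper, and the rows reuse the prioritization matrix from this guide so no pricing data is invented.

```python
from html import escape


def html_table(headers: list[str], rows: list[list[str]]) -> str:
    """Render headers and rows as a plain HTML table, escaping cell text."""
    head = "".join(f"<th>{escape(h)}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


markup = html_table(
    ["Factor", "Weight", "How to Score"],
    [
        ["Existing Organic Traffic", "25%", "High (1,000+/mo), Medium (100-999), Low (<100)"],
        ["Current AI Citation Status", "30%", "Not cited = highest priority"],
    ],
)
```

Escaping matters here: a cell such as `Low (<100)` would otherwise be read as the start of a tag.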

Q&A Patterns and FAQ Sections

Add FAQ sections with question headings and direct answers. These map directly to how people query AI. Keep answers concise and factual.

Sourced Statistics

Add 3+ named sources with verifiable data. Statistical content increases AI visibility by roughly 25% according to Semrush's analysis. Named expert quotes and cited research build the authority signals that AI trusts.

Schema and Authorship

Implement Article, Author, FAQ, and Organization schema. Reference our schema markup for AI visibility guide for the full implementation. Add clear author names, bios, credentials, and linked profiles — see our E-E-A-T guide for details.
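As a starting point, the schema types above can be expressed as JSON-LD and emitted from a build script. The sketch below uses schema.org's `Article`, `Person`, `Organization`, and `FAQPage` types with this article's own metadata. Treating Cintra as the publisher is an assumption, `jsonld_script` is a hypothetical helper, and a real page would normally carry more properties (URL, image, modified date).

```python
import json

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "AI Content Audit: 7-Point Scorecard to Get Cited by AI",
    "datePublished": "2026-03-18",
    "author": {"@type": "Person", "name": "Tanush Yadav"},
    "publisher": {"@type": "Organization", "name": "Cintra"},  # assumed publisher
}

faq = {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {
            "@type": "Question",
            "name": "How long does an AI content audit take?",
            "acceptedAnswer": {
                "@type": "Answer",
                "text": "A single page takes 15 to 30 minutes to score and document.",
            },
        }
    ],
}


def jsonld_script(data: dict) -> str:
    """Wrap a JSON-LD object in a script tag for the page's HTML."""
    # Escape "</" so text inside the JSON cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


article_tag = jsonld_script(article)
faq_tag = jsonld_script(faq)
```

Validate the output with a structured-data testing tool before shipping, since a malformed block can be ignored entirely.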

How Do You Measure the Impact of Your AI Content Audit?

Baseline your pages against four metrics before the audit, re-test monthly, and expect citation improvements within 4-8 weeks of implementing fixes.

Track your progress with our 4-metric framework. Read our full breakdown of how to measure AI visibility for deeper detail.

The four metrics:

- Brand Mention Rate — How often does AI mention your brand when asked relevant queries?

- AI Share of Voice — What percentage of AI answers in your category cite you vs. competitors?

- Citation Quality — Are you cited as a primary source or just mentioned in passing?

- Source Diversity — How many different AI platforms cite you?

Baseline your pages before starting the audit. Run your target queries and record the status.
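One way to turn those recorded results into the four metrics is sketched below. The formulas are assumptions made for illustration, since this guide names the metrics without fixing exact definitions. The `Observation` record, its field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One query run on one platform (hypothetical record shape)."""
    query: str
    platform: str                  # e.g. "ChatGPT", "Perplexity", "AI Overviews"
    status: str                    # "primary", "mention", or "absent"
    competitor_citations: int = 0  # competitors cited in the same answer


def baseline_metrics(observations: list[Observation]) -> dict[str, float]:
    """Compute the four metrics under the assumed definitions noted inline."""
    if not observations:
        raise ValueError("need at least one observation")
    cited = [o for o in observations if o.status != "absent"]
    competitor_total = sum(o.competitor_citations for o in observations)
    all_citations = len(cited) + competitor_total
    return {
        # Share of runs where the brand appears at all.
        "brand_mention_rate": len(cited) / len(observations),
        # Our citations as a share of all citations seen (ours plus competitors').
        "ai_share_of_voice": len(cited) / all_citations if all_citations else 0.0,
        # Share of our appearances that are primary-source citations.
        "citation_quality": (
            sum(o.status == "primary" for o in cited) / len(cited) if cited else 0.0
        ),
        # Number of distinct platforms that cite or mention us.
        "source_diversity": len({o.platform for o in cited}),
    }


# Made-up baseline, for illustration only.
baseline = baseline_metrics([
    Observation("best crm for startups", "ChatGPT", "absent", 3),
    Observation("best crm for startups", "Perplexity", "primary", 2),
    Observation("crm tools for yc companies", "ChatGPT", "mention", 3),
    Observation("crm tools for yc companies", "Perplexity", "absent", 2),
])
```

Saving the same structure each month makes before/after comparisons a simple diff of two dictionaries.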

Re-test monthly. AI platforms index and re-evaluate content on rolling cycles. Consistent tracking proves ROI.

Expect citation improvements within four to eight weeks after structural fixes. Schema changes often surface faster — two to four weeks. AI citations average 25.7% fresher than traditional Google results, giving recently updated content a structural edge.

Frequently Asked Questions About AI Content Audits

These are the questions we hear most from marketing teams running their first AI content audit.

How long does an AI content audit take?

A single page takes 15 to 30 minutes to score and document. A full site audit of 50-100 priority pages typically requires two to three weeks, including implementation of fixes.

How is an AI content audit different from an SEO audit?

An SEO audit checks technical health and ranking factors. An AI content audit checks citability — whether AI can extract, trust, and cite your answers. Different tools, different fixes, different outcomes.

Which pages should I audit first?

Start with pages that have the highest combination of organic traffic, conversion value, and relevance to queries people ask AI, and that AI is not citing yet. The prioritization matrix in this guide helps you rank them.

How often should I re-audit my content?

Re-test citation status monthly. Do a full re-audit quarterly or after significant content changes. AI freshness signals reward consistent updates.

Can I do this myself or do I need an agency?

You can score your own pages using the 7-point scorecard in this guide. The complexity scales with site size: auditing 10 pages is manageable, while auditing 200 and implementing fixes across all seven factors is where most teams bring in help.

Conclusion

- 80% of AI visibility wins come from optimizing existing content, not creating new pages.

- The 7-point citability scorecard turns "is my content good enough?" into an objective score out of 35.

- Prioritize by traffic + conversion value + citation status + relevance to AI queries. Don't audit everything equally.

- Measure with the 4-metric framework, re-test monthly, expect results in four to eight weeks.

Pick your top three pages by traffic today. Score each against the 7-point scorecard. The lowest-scoring factors are your first fixes.

Want us to audit your entire content library? Cintra's AI visibility audit scores every page, prioritizes the fixes, and implements the changes that get you cited. Book a free strategy call to see where you stand.